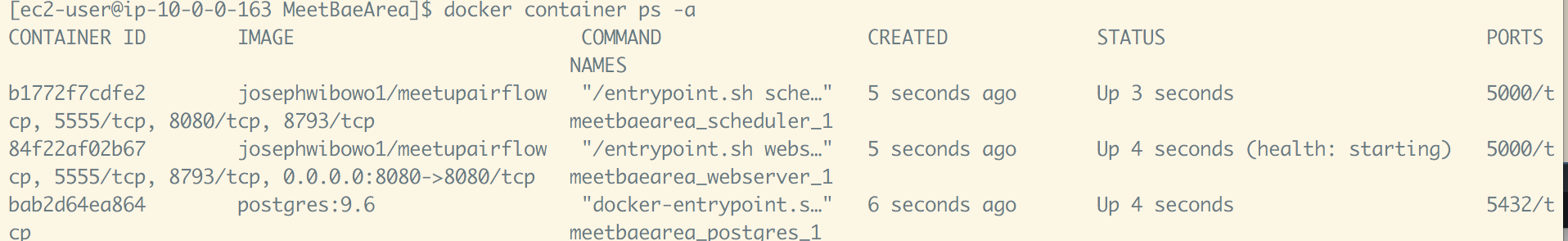

I'm running Airflow inside a Docker container, using the `puckel/docker-airflow:latest` image from Docker Hub. I can access the Airflow UI at localhost:8080, but the DAG does not execute and I get the error mentioned in the subject above. I even added a pip command to install apache-airflow in my Dockerfile. Inside the Airflow UI, I see a ton of variations of these messages.

Here is how my Dockerfile, docker-compose.yml and dag.py look. Dockerfile: `FROM puckel/docker-airflow:latest`. Another variant reads `FROM puckel/docker-airflow:1.10.6`, `RUN pip install --user psycopg2-binary`, `ENV AIRFLOWHOME/usr.` The docker-compose.yml mounts `config/airflow.cfg:/usr/local/airflow/airflow.cfg` and sets `FERNET_KEY=46BKJoQYlPPOexq0OhDZnIlNepKFf87WFwLbfzqDDho=`. The dag.py imports `SubDagOperator`, `PythonOperator`, `BranchPythonOperator` and `BashOperator`, defines `choosing_best_model = BranchPythonOperator(`, and chains the tasks starting with `training_model_tasks >> choosing_best_model >>`. Am I missing anything here? Please let me know if you can.

The PapermillOperator is designed to run a notebook locally, so you need to supply a kernel engine for your Airflow environment to execute the notebook code. This tutorial uses the ipykernel package to run the kernel, but there are other options available, such as the jupyter package.

However, when I shifted this project, I had limited knowledge of modifying the Docker container and configuring the Hadoop components. I worked around this the hard way, by submitting the job from the Airflow container to the other components. But you have to install all those components inside the Airflow Docker image first to activate this feature.

We are using Airflow to schedule our jobs on EMR, and we currently want to use Apache Livy to submit Spark jobs via Airflow. I need more guidance on the following: which Airflow-Livy operator should we use for Python 3+ PySpark and Scala jobs?
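The post names a `choosing_best_model` task built on `BranchPythonOperator` but never shows its callable. As a rough sketch only: a branch callable returns the `task_id` of the downstream task that should run. The task ids `accurate` and `inaccurate`, the 0.8 threshold, and the idea of comparing accuracy scores are all assumptions made for illustration, not details from the original DAG.

```python
from typing import Iterable

# Hypothetical task ids and threshold; the original post does not show them.
ACCURATE_TASK_ID = "accurate"
INACCURATE_TASK_ID = "inaccurate"
ACCURACY_THRESHOLD = 0.8


def choose_best_model(
    accuracies: Iterable[float],
    threshold: float = ACCURACY_THRESHOLD,
) -> str:
    """Return the task_id of the downstream task that should run next."""
    scores = list(accuracies)
    # Follow the "accurate" branch only if at least one model beats the threshold.
    if scores and max(scores) > threshold:
        return ACCURATE_TASK_ID
    return INACCURATE_TASK_ID


if __name__ == "__main__":
    print(choose_best_model([0.61, 0.92, 0.75]))  # prints "accurate"
```

In a real DAG, a function like this would be passed as the `python_callable` of the `BranchPythonOperator`, with the scores pulled from the upstream training tasks (for example via XCom) rather than passed in directly. Keeping the decision in a plain function makes it testable without a running Airflow instance.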
